How long does it take to scan a spectrum?

Several scan parameters directly or indirectly determine both the time it takes to measure a spectrum and the quality of that spectrum: the wavelength range, the scan speed, the slit width, and the data point interval. These four parameters can be traded off against one another to control a spectrum's resolution, its noise, and the time it takes to scan a single spectrum.

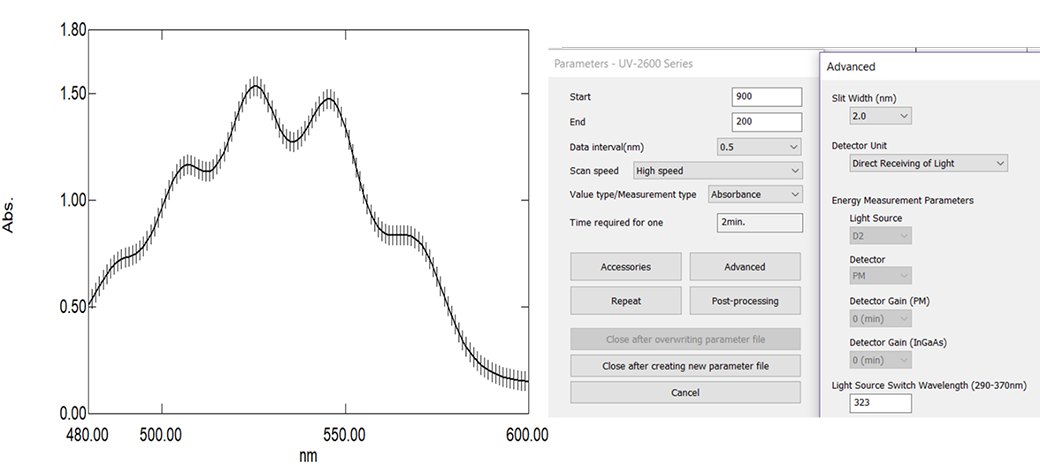

We have not yet addressed the data interval, which is how often the instrument collects a wavelength/absorbance data pair as it scans across the wavelength range. The data interval has an indirect effect on spectral resolution: too few data points will distort the spectrum, whereas too many data points will make the spectrum take a long time to scan. The data interval should always be less than the slit width setting. As a rule of thumb, each peak should be made up of at least 10 to 15 data points.

Above is a spectrum that has five vibrational sub-peaks. The vertical lines indicate where each data point was acquired. As you can see, the spectrum meets the requirement of around 15 data points to “define” each peak. Too few data points would cause a lack of “data resolution”, which could shift the peak maximum absorbance values and distort the peak shape. Absorbance peaks of dissolved solids in solution are usually somewhat broad; therefore, larger slit widths and data intervals can be employed to speed up the analysis.
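The rule of thumb for the data interval can be checked with a little arithmetic. The sketch below is only an illustration: the function names and the example peak width are hypothetical, and it assumes the first data point falls on the edge of the peak.

```python
import math


def points_per_peak(peak_width_nm: float, data_interval_nm: float) -> int:
    """Approximate number of data points that fall across one peak.

    Assumes (for illustration) that a data point lands on the peak edge,
    so both edges are counted, hence the +1.
    """
    if peak_width_nm <= 0 or data_interval_nm <= 0:
        raise ValueError("peak width and data interval must be positive")
    return math.floor(peak_width_nm / data_interval_nm) + 1


def check_data_interval(peak_width_nm: float,
                        data_interval_nm: float,
                        slit_width_nm: float,
                        min_points: int = 10) -> list[str]:
    """Return a list of rule-of-thumb violations (empty if none)."""
    problems = []
    # Rule 1: the data interval should be less than the slit width.
    if data_interval_nm >= slit_width_nm:
        problems.append("data interval should be less than the slit width")
    # Rule 2: each peak should be defined by at least 10 to 15 points.
    n = points_per_peak(peak_width_nm, data_interval_nm)
    if n < min_points:
        problems.append(f"only {n} points per peak; aim for at least {min_points}")
    return problems


if __name__ == "__main__":
    # Hypothetical 7 nm wide peak, 0.5 nm data interval, 1.0 nm slit width.
    print(points_per_peak(7.0, 0.5))
    print(check_data_interval(7.0, 0.5, 1.0))
    # A 2.0 nm interval breaks both rules for the same peak.
    print(check_data_interval(7.0, 2.0, 1.0))
```

With these made-up numbers, a 0.5 nm interval gives 15 points across the peak, while a 2.0 nm interval gives only 4 and also exceeds the slit width.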

At right is a picture of a Shimadzu UV-2600 instrument’s control panel. You select the wavelength range, slit width, data interval, and scan speed, and from these settings the software calculates an estimated time required for one scan.
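How the instrument software arrives at its estimate is not described here, but a rough back-of-the-envelope version is easy to sketch. The example below assumes that the scan speed is given in nm/min and that the scan time is dominated by the wavelength range divided by the scan speed; any extra time per data point and any instrument overhead are ignored. The function names are hypothetical and are not part of any Shimadzu software.

```python
import math


def estimate_scan_time_s(start_nm: float, end_nm: float,
                         scan_speed_nm_per_min: float) -> float:
    """Rough scan time in seconds: wavelength range divided by scan speed.

    Simplifying assumption: no per-point dwell time or instrument overhead.
    """
    if scan_speed_nm_per_min <= 0:
        raise ValueError("scan speed must be positive")
    span_nm = abs(end_nm - start_nm)
    return span_nm / scan_speed_nm_per_min * 60.0


def number_of_data_points(start_nm: float, end_nm: float,
                          data_interval_nm: float) -> int:
    """Number of wavelength/absorbance pairs collected over the range."""
    if data_interval_nm <= 0:
        raise ValueError("data interval must be positive")
    span_nm = abs(end_nm - start_nm)
    # Small epsilon guards against floating-point round-off in the division.
    return math.floor(span_nm / data_interval_nm + 1e-9) + 1


if __name__ == "__main__":
    # Hypothetical settings: scan from 800 nm down to 200 nm
    # at 300 nm/min with a 0.5 nm data interval.
    print(estimate_scan_time_s(800, 200, 300))   # seconds
    print(number_of_data_points(800, 200, 0.5))  # data pairs
```

For these assumed settings the estimate is 120 seconds for 1201 data points. Halving the scan speed doubles the estimated time, which is the kind of trade-off the four parameters control.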